Ollama Integration Guide

Integrate Ollama to use AI Assist, a tool that generates and improves text and speeds up content creation. Ollama lets you run large language models locally on your own hardware.

1. Setting Up

To use Ollama, you need to have Ollama installed and running on your server. If you haven't set it up yet, visit the Ollama website for installation instructions.

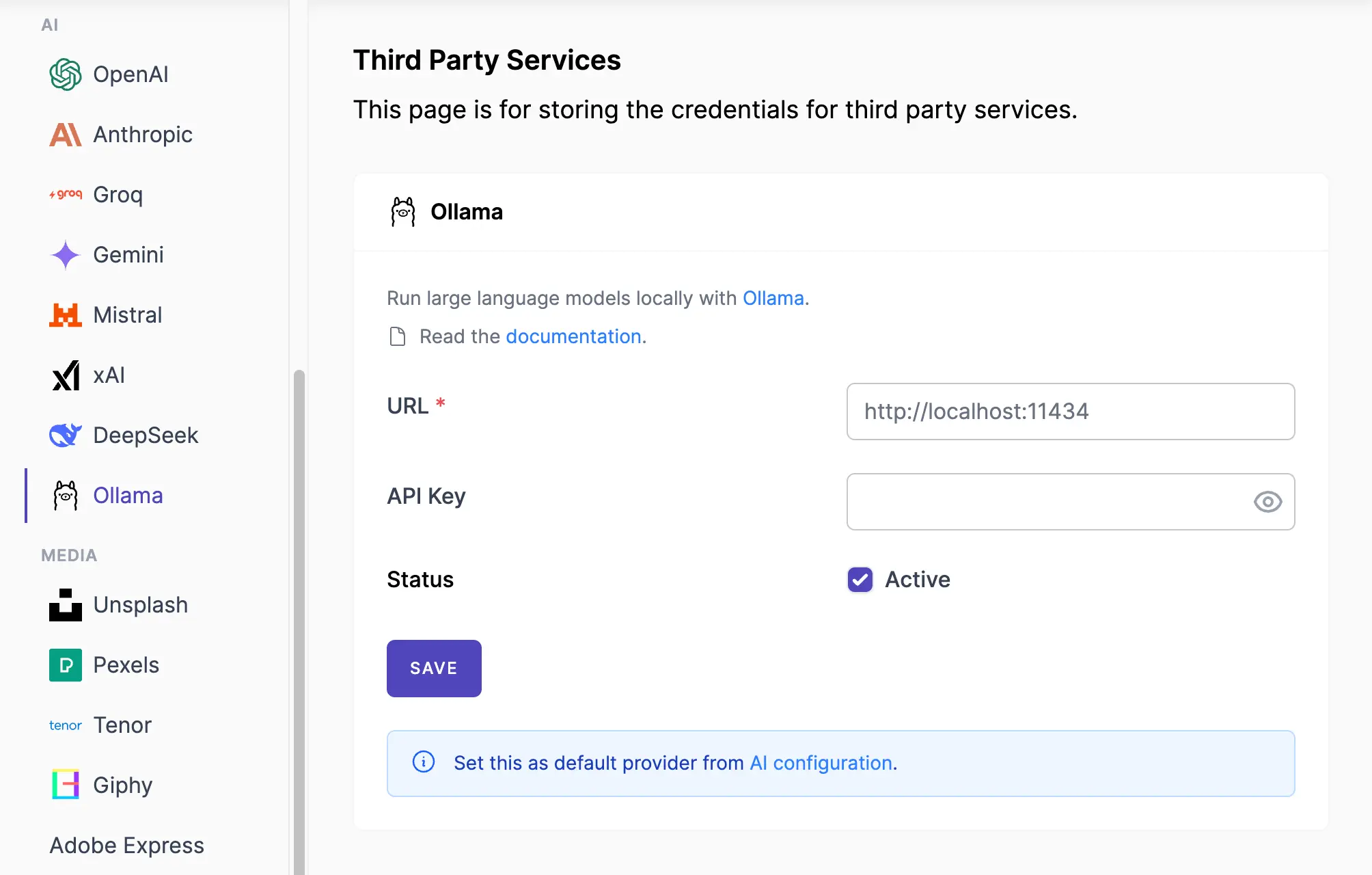

- Navigate to Admin Console -> Services.

- Click Ollama.

- Enter the API URL of your Ollama instance (e.g., http://localhost:11434).

- Make sure the Active toggle is turned on.

- Save the changes.
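Before saving, you may want to confirm that the API URL actually reaches Ollama. The following minimal Python sketch is not part of Mixpost. It queries Ollama's `/api/tags` endpoint, which lists the models installed on that instance. It assumes the default address `http://localhost:11434`, and the helper names `endpoint`, `model_names`, and `check_ollama` are hypothetical.

```python
"""Check that an Ollama instance is reachable and list its local models.

A minimal sketch using only the standard library. OLLAMA_URL is an assumption:
replace it with the API URL you entered in Admin Console -> Services.
"""
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: default local Ollama address


def endpoint(base_url: str, path: str) -> str:
    """Join the base API URL and an endpoint path without doubling slashes."""
    return base_url.rstrip("/") + "/" + path.lstrip("/")


def model_names(tags_response: dict) -> list:
    """Extract model names from the JSON body returned by GET /api/tags."""
    return [m["name"] for m in tags_response.get("models", [])]


def check_ollama(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> list:
    """Return the names of locally installed models; raises if the URL is unreachable."""
    with urllib.request.urlopen(endpoint(base_url, "/api/tags"), timeout=timeout) as resp:
        return model_names(json.load(resp))


if __name__ == "__main__":
    try:
        names = check_ollama()
    except (OSError, ValueError) as exc:  # URLError is an OSError; bad JSON is a ValueError
        print(f"Could not query Ollama at {OLLAMA_URL}: {exc}")
    else:
        print("Ollama is reachable. Local models:", names or "none found")
```

If the list is empty, pull a model first (for example with `ollama pull <model>`). The model you select in the next section presumably has to be available on this Ollama instance.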


2. Configuring AI Assist

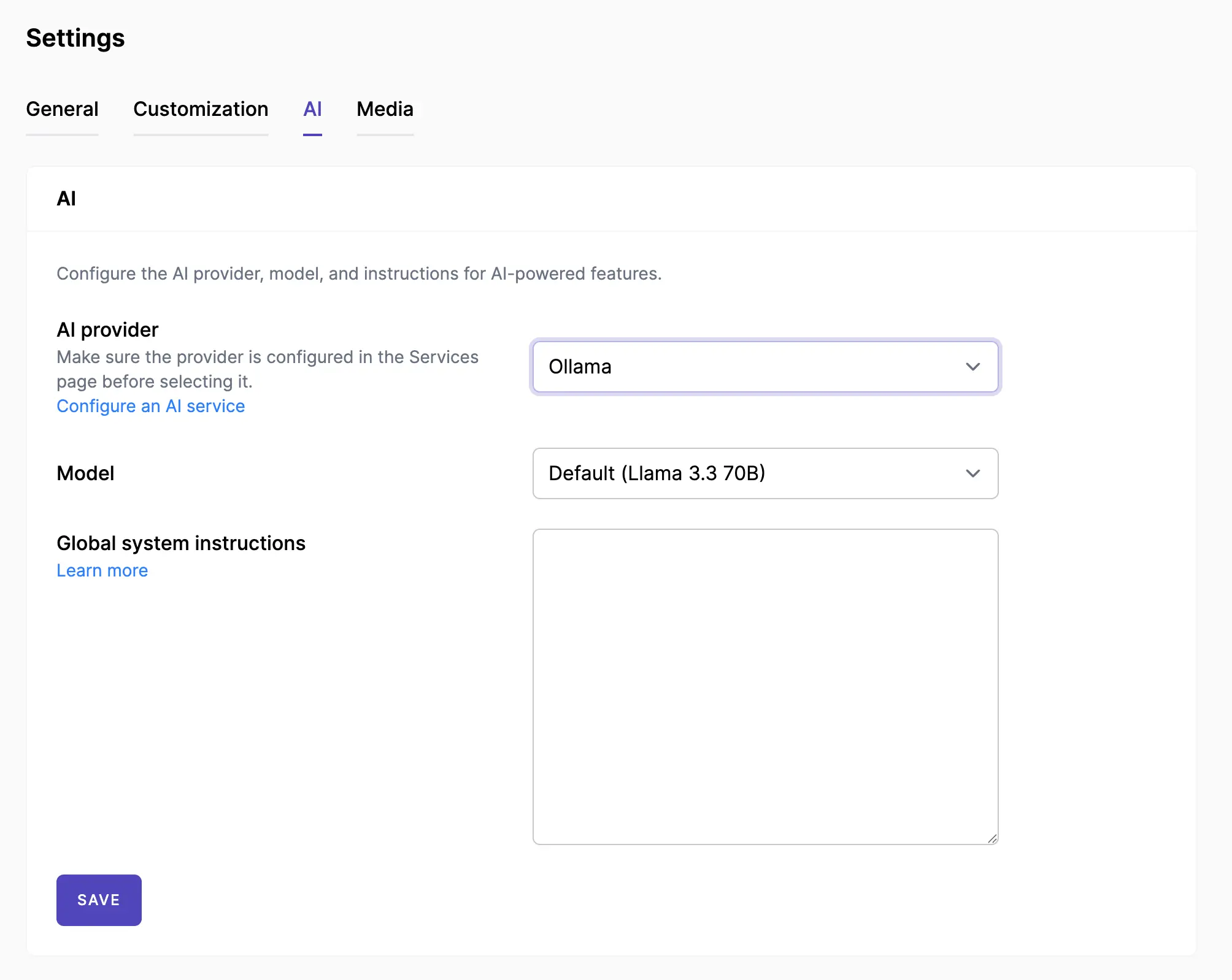

- Navigate to Admin Console -> Settings -> AI.

- In the AI settings, select Ollama as your AI provider.

- Select the model you want to use.

- Optionally, enter global system instructions that the AI assistant should follow.
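This guide doesn't document how Mixpost sends these instructions to Ollama. As a rough illustration only, Ollama's chat API (`POST /api/chat`) accepts a `system` message ahead of the user's prompt, and that is the usual way such instructions reach a model. The sketch below builds this kind of request and can optionally send it. The model name `llama3`, the example texts, and the helpers `build_chat_payload` and `chat` are placeholders.

```python
"""Illustrate how a global system instruction can accompany a prompt to Ollama.

Assumptions: the model name and texts are placeholders, and this is not
necessarily how Mixpost builds its requests internally.
"""
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: default local Ollama address


def build_chat_payload(model: str, system_instructions: str, prompt: str) -> dict:
    """Build a non-streaming /api/chat request, putting the system message first if given."""
    messages = []
    if system_instructions.strip():
        messages.append({"role": "system", "content": system_instructions})
    messages.append({"role": "user", "content": prompt})
    return {"model": model, "messages": messages, "stream": False}


def chat(payload: dict, base_url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """POST the payload to Ollama's /api/chat and return the assistant's reply text."""
    req = urllib.request.Request(
        base_url.rstrip("/") + "/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    payload = build_chat_payload(
        model="llama3",  # hypothetical: use a model installed on your instance
        system_instructions="Write in a friendly tone and keep posts short.",
        prompt="Draft a post announcing our new product.",
    )
    print(json.dumps(payload, indent=2))
    # To send it to a running Ollama instance, uncomment:
    # print(chat(payload))
```

With `stream` set to `false`, Ollama returns a single JSON object instead of a stream of partial responses, which keeps the sketch simple.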


3. Done

You have now set up Ollama and can use the AI Assist feature on your Mixpost instance. Next steps:

- Set up Brand Voice — Define your brand's personality and tone per workspace.

- Use AI Assistant Module — Start creating content with AI in the post editor and post activity comments.